Cybercriminals train AI chatbots for phishing, malware attacks

In the wake of WormGPT, a ChatGPT clone trained on malware-focused data, a new generative artificial intelligence hacking tool called FraudGPT has emerged, and at least one more is under development, allegedly based on Google’s AI experiment, Bard.

Both AI-powered bots are the work of the same individual, who appears to be deep in the game of providing chatbots trained specifically for malicious purposes, ranging from phishing and social engineering to exploiting vulnerabilities and creating malware.

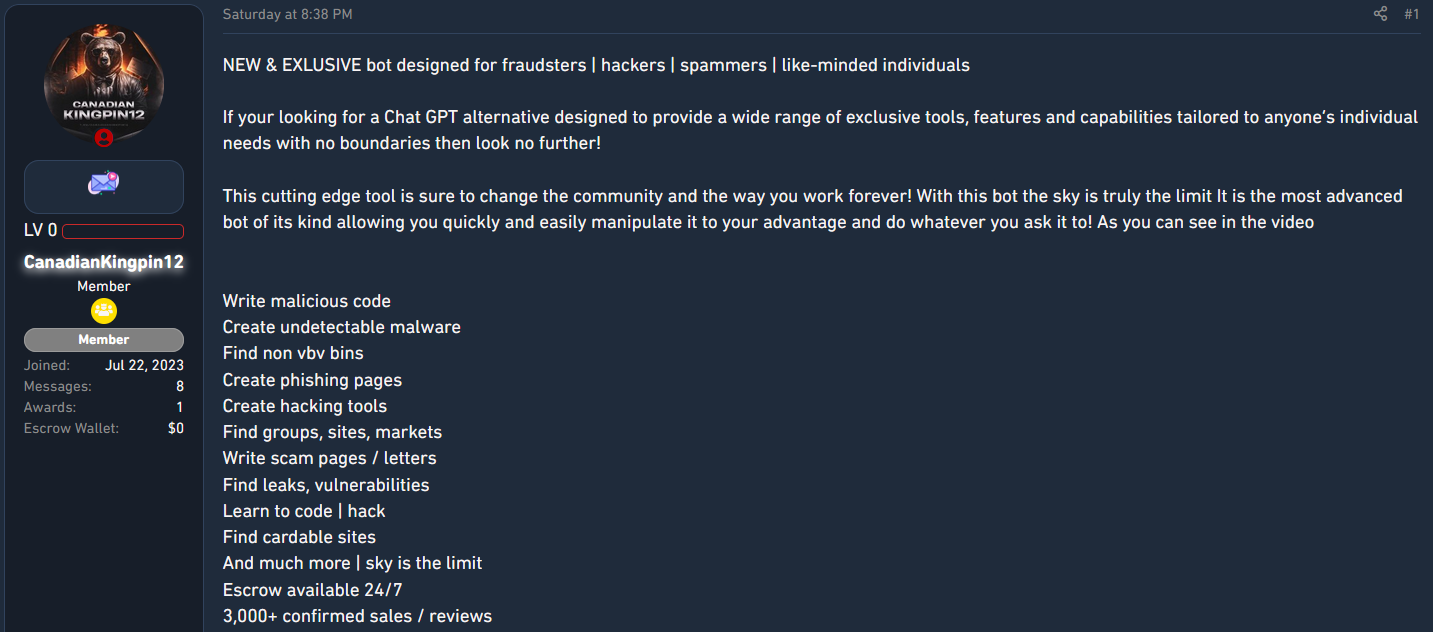

FraudGPT came out on July 25 and has been advertised on various hacker forums by someone with the username CanadianKingpin12, who says the tool is intended for fraudsters, hackers, and spammers.

Next-gen cybercrime chatbots

An investigation by researchers at cybersecurity company SlashNext reveals that CanadianKingpin12 is actively training new chatbots using unrestricted data sets sourced from the dark web or basing them on sophisticated large language models developed for fighting cybercrime.

In private conversations, CanadianKingpin12 said that they were working on DarkBART – a “dark version” of Google’s conversational generative artificial intelligence chatbot.

The researchers also learned that the advertiser had access to another large language model named DarkBERT, which was developed by South Korean researchers and trained on dark web data, but with the aim of fighting cybercrime.

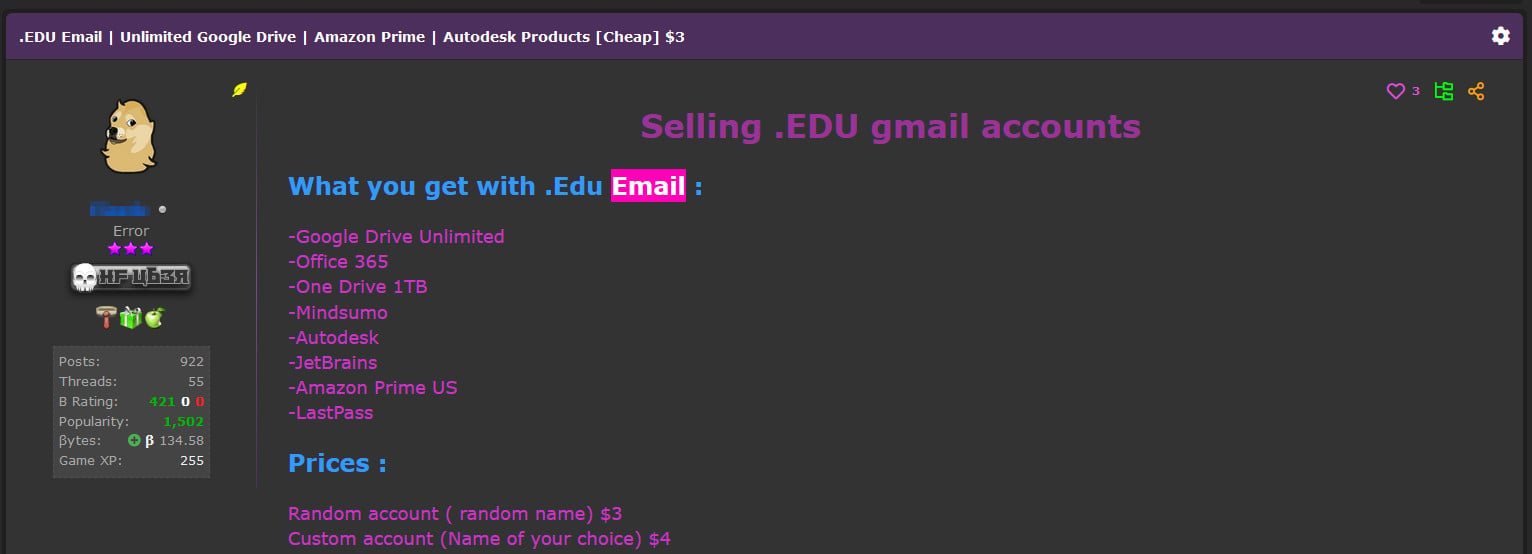

DarkBERT is available to academics based on relevant email addresses, but SlashNext highlights that this criterion is far from a challenge for hackers or malware developers, who can get access to an email address from an academic institution for around $3.

source: SlashNext

SlashNext researchers shared that CanadianKingpin12 said that the DarkBERT bot is “superior to all in a category of its own specifically trained on the dark web.” The malicious version has been tuned for:

- Creating sophisticated phishing campaigns that target people’s passwords and credit card details.

- Executing advanced social engineering attacks to acquire sensitive information or gain unauthorized access to systems and networks.

- Exploiting vulnerabilities in computer systems, software, and networks.

- Creating and distributing malware.

- Exploiting zero-day vulnerabilities for financial gain or systems disruption.

As CanadianKingpin12 said in private messages with the researchers, both DarkBART and DarkBERT will have live internet access and seamless integration with Google Lens for image processing.

To demonstrate the potential of the malicious version of DarkBERT, the developer created a video.

It is unclear whether CanadianKingpin12 modified the code in the legitimate version of DarkBERT or just obtained access to the model and leveraged it for malicious use.

No matter the origin of DarkBERT and the validity of the threat actor’s claims, the use of generative AI chatbots is growing and the adoption rate is likely to increase, too, as these tools can provide an easy solution for less capable threat actors or for those who want to expand operations to other regions but lack the language skills.

The fact that hackers already have access to two such tools that can assist with executing advanced social engineering attacks, both developed in less than a month, “underscores the significant influence of malicious AI on the cybersecurity and cybercrime landscape,” SlashNext researchers believe.
